The Wrong AI Metric Is Winning


Most AI ROI conversations start with the same screenshot: a dashboard, a cost line, and a token count.

Then the room asks the predictable questions:

Can we cut the prompt?

Can we switch models?

Can we cap usage?

There's a better way.

Token budgets feel like control because they are visible. But visibility is not value. Tokens measure consumption; they do not measure business progress. When a team confuses consumption with value, it starts winning the metric while losing the business.

The Proxy Problem

Every era picks a metric that feels "close enough" to the thing that matters. Then, slowly, the proxy becomes the product.

In media, minutes watched became the stand-in for satisfaction. Views were too cheap. Clicks were too easy to game. So the system moved deeper into behavior and asked a better question: how long did attention stay?

That was useful. It was also dangerous.

Once the proxy hardens, people start serving the proxy. Not the user. Not the business. The metric.

GenAI is now walking into the same trap. Tokens are our "minutes watched." They are a decent proxy for cost, but a terrible proxy for outcome.

The expert sees a token chart and thinks the economics are obvious. The builder asks a harder question: What, exactly, did the business get back?

The Real Bill

In most real deployments, the binding constraint is not token volume. It is verification.

Your AI bill is rarely the true bill. The true bill includes:

- The minutes a reviewer spends cleaning up weak output.

- The delay between generation and approval.

- The exceptions that bounce back into human queues.

- The trust erosion that makes teams stop using the system at all.

A model can look cheap on paper and still be expensive in practice. The model invoice is only one line item. The operational drag is the rest of the invoice.

Measure What The Business Can Bank

If you want real GenAI ROI, stop asking how many tokens you burned. Start asking: How many outcomes did we ship?

1. Define the Verified Work Unit (VWU)

You need a unit of value the business can actually cash. Not "messages" or "responses," but a Verified Work Unit.

A VWU is a completed, accepted, business-relevant outcome. Generated work is not value; accepted work is.

Support: A ticket resolved without escalation.

Sales: A meeting booked from an outbound lead.

Legal: A contract clause redlined and accepted.
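One way to make "verified" operational is to write the acceptance rule down as code and count only the items that pass it. The sketch below is a minimal, hypothetical illustration for the Support case. The `WorkItem` record, its `completed`, `accepted`, and `escalated` fields, and the helper names are assumptions, not a standard schema. Only the rule itself (resolved without escalation) comes from the examples above.

```python
from dataclasses import dataclass


@dataclass
class WorkItem:
    """Hypothetical record of one AI-assisted work item."""
    item_id: str
    completed: bool  # the workflow reached an end state
    accepted: bool   # a human or downstream system accepted the result
    escalated: bool  # the item bounced back into a human queue


def is_support_vwu(item: WorkItem) -> bool:
    """Support VWU: a ticket resolved and accepted without escalation."""
    return item.completed and item.accepted and not item.escalated


def count_vwus(items: list[WorkItem]) -> int:
    """Count only verified outcomes; generated-but-unaccepted work scores zero."""
    return sum(1 for item in items if is_support_vwu(item))


tickets = [
    WorkItem("T-1", completed=True, accepted=True, escalated=False),
    WorkItem("T-2", completed=True, accepted=False, escalated=False),
    WorkItem("T-3", completed=True, accepted=True, escalated=True),
]
print(count_vwus(tickets))  # 1
```

Swapping in a different predicate (meeting booked, clause accepted) changes the definition of the unit without touching the counting logic.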

2. Measure Time-to-Verified Outcome (TVO)

AI does not create value when the output appears on screen. It creates value when the output survives contact with reality. TVO is the elapsed time from the original request to a verified, usable outcome.

If TVO is measured in days, you don't have a model problem. You have an organization design problem.

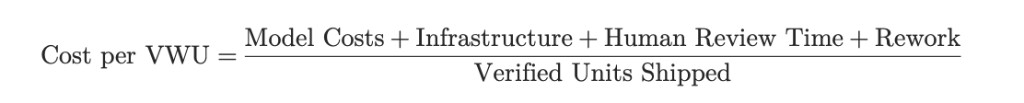
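Measuring TVO needs only two timestamps per item: when the request arrived and when the output was verified. A minimal sketch, assuming hypothetical `(requested, verified)` pairs where `verified` is `None` for output that never passed review; the function names are illustrative.

```python
from datetime import datetime
from statistics import median
from typing import Optional


def tvo_hours(requested: datetime, verified: Optional[datetime]) -> Optional[float]:
    """Time-to-Verified Outcome in hours, or None if the output was never verified."""
    if verified is None:
        return None
    return (verified - requested).total_seconds() / 3600


def median_tvo_hours(events: list[tuple[datetime, Optional[datetime]]]) -> Optional[float]:
    """Median TVO across verified items; returns None if nothing was verified."""
    durations = []
    for requested, verified in events:
        hours = tvo_hours(requested, verified)
        if hours is not None:
            durations.append(hours)
    return median(durations) if durations else None


events = [
    (datetime(2024, 5, 6, 9, 0), datetime(2024, 5, 6, 11, 0)),   # 2 hours
    (datetime(2024, 5, 6, 9, 0), datetime(2024, 5, 7, 9, 0)),    # 24 hours
    (datetime(2024, 5, 6, 10, 0), datetime(2024, 5, 6, 14, 0)),  # 4 hours
    (datetime(2024, 5, 6, 10, 0), None),                         # never verified
]
unverified = sum(1 for _, verified in events if verified is None)
print(median_tvo_hours(events), unverified)  # 4.0 1
```

The median is used here because a handful of stuck items can drag a mean out to days. Unverified items are excluded from TVO and are better tracked as exceptions in their own right.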

3. Calculate Cost Per VWU

Now the unit economics become honest:

Cost per VWU = (Model costs + Infrastructure + Human review + Rework) / Verified Work Units

That denominator is the truth serum. A bot that burns 2 million tokens but resolves 500 tickets cleanly is cheap. A "lightweight" bot that uses 200,000 tokens but creates 200 human escalations is expensive.
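The token comparison above only flips once human handling is priced in. The sketch below applies Cost per VWU to the two bots, but every price in it is a hypothetical assumption: $10 per million tokens, $15 of human time per escalation, and a lightweight bot that handled 500 tickets of which 200 escalated (leaving 300 VWUs). Only the token counts, the 500 clean resolutions, and the 200 escalations come from the text.

```python
def cost_per_vwu(tokens: int, price_per_million_tokens: float,
                 escalations: int, cost_per_escalation: float,
                 vwus: int) -> float:
    """Total cost (model tokens + human handling of escalations) divided by VWUs."""
    if vwus <= 0:
        raise ValueError("no verified work units: cost per VWU is undefined")
    model_cost = tokens / 1_000_000 * price_per_million_tokens
    human_cost = escalations * cost_per_escalation
    return (model_cost + human_cost) / vwus


PRICE_PER_M = 10.0      # hypothetical $ per million tokens
ESCALATION_COST = 15.0  # hypothetical $ of human time per escalation

# Bot A: 2M tokens, 500 tickets resolved cleanly, no escalations.
bot_a = cost_per_vwu(2_000_000, PRICE_PER_M, 0, ESCALATION_COST, vwus=500)
# Bot B (assumed volume): 200k tokens, 500 tickets, 200 escalated, so 300 VWUs.
bot_b = cost_per_vwu(200_000, PRICE_PER_M, 200, ESCALATION_COST, vwus=300)
print(f"Bot A: ${bot_a:.2f} per VWU")  # Bot A: $0.04 per VWU
print(f"Bot B: ${bot_b:.2f} per VWU")  # Bot B: $10.01 per VWU
```

Under these assumed prices, the lightweight bot saves about $18 in tokens while its escalations add $3,000 of human time.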

4. VWU Example

Example: Revenue Forecast Adjustment Review

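Each week, the system drafts forecast changes by account, product line, or region. Finance reviews and approves them, and supply chain teams use the approved updates to adjust purchasing, inventory, and production plans. Each approved adjustment counts as one VWU, and the figures below cover a batch of 100 approved adjustments.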

AI-Assisted Workflow Cost

Model costs: $120

Infrastructure: $80

Human review: $600

Rework: $300

Total AI-assisted cost = $1,100

Current Workflow Cost

Planner time: $2,400

Finance review: $700

Rework and follow-up: $400

Total current cost = $3,500

The Unit Math

AI-assisted Cost per VWU = $1,100 / 100 adjustments = $11

Current Cost per VWU = $3,500 / 100 adjustments = $35

Savings per VWU = $35 - $11 = $24

The Result

Total Savings = $24 x 100 = $2,400

ROI = $2,400 / $1,100 = 218%

This takes the workflow from $35 to $11 per verified forecast adjustment: a 69% lower unit cost and 218% ROI.
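The unit math above can be reproduced in a few lines, which makes it easy to rerun whenever a line item changes. A minimal sketch using the example's own figures; the function and dictionary key names are illustrative, and ROI is computed as in the text (total savings divided by AI-assisted cost).

```python
def unit_economics(ai_costs: dict[str, float], current_costs: dict[str, float],
                   vwus: int) -> dict[str, float]:
    """Compare two workflows on cost per Verified Work Unit."""
    ai_total = sum(ai_costs.values())
    current_total = sum(current_costs.values())
    ai_per_vwu = ai_total / vwus
    current_per_vwu = current_total / vwus
    savings_per_vwu = current_per_vwu - ai_per_vwu
    total_savings = savings_per_vwu * vwus
    return {
        "ai_per_vwu": ai_per_vwu,
        "current_per_vwu": current_per_vwu,
        "savings_per_vwu": savings_per_vwu,
        "total_savings": total_savings,
        "roi": total_savings / ai_total,  # savings relative to AI-assisted spend
        "unit_cost_reduction": savings_per_vwu / current_per_vwu,
    }


ai = {"model": 120, "infrastructure": 80, "human_review": 600, "rework": 300}
current = {"planner_time": 2400, "finance_review": 700, "rework_follow_up": 400}
result = unit_economics(ai, current, vwus=100)
print(f"AI-assisted: ${result['ai_per_vwu']:.0f}/VWU, current: ${result['current_per_vwu']:.0f}/VWU")
print(f"ROI: {result['roi']:.0%}, unit cost down {result['unit_cost_reduction']:.0%}")
# AI-assisted: $11/VWU, current: $35/VWU
# ROI: 218%, unit cost down 69%
```

Because both workflows are measured over the same 100 VWUs, total savings also equals the difference between the two totals ($3,500 - $1,100).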

A One-Week Implementation Plan

If you want to make this practical, do it in seven days:

Day 1: Pick one workflow with real volume (Support, SDR, Invoicing).

Day 2: Define the Verified Work Unit. Write down what "verified" means.

Day 3: Instrument Time-to-Verified Outcome. Track the timestamps.

Day 4: Track correction and exception rates. Measure edits per output.

Day 5: Build Cost per VWU. Include human review time.

Day 6: Run two variants: one optimized for cheap tokens, the other for fast, verified outcomes.

Day 7: Kill the weaker variant and publish the learning.
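Days 4 through 7 boil down to scoring two variants on the same scorecard. The sketch below is one hypothetical way to do it: the `VariantRun` fields, the tie-breaking rule, and all figures are invented for illustration, not results from a real test.

```python
from dataclasses import dataclass


@dataclass
class VariantRun:
    """Hypothetical one-week results for one workflow variant."""
    name: str
    total_cost: float        # model + infrastructure + human review + rework, in $
    outputs: int             # items the system generated
    edits: int               # human edits across all outputs
    vwus: int                # outputs accepted as Verified Work Units
    median_tvo_hours: float  # median Time-to-Verified Outcome

    @property
    def edits_per_output(self) -> float:
        return self.edits / self.outputs

    @property
    def cost_per_vwu(self) -> float:
        return self.total_cost / self.vwus


def pick_winner(a: VariantRun, b: VariantRun) -> VariantRun:
    """Prefer the lower cost per VWU; break ties with the faster median TVO."""
    return min((a, b), key=lambda v: (v.cost_per_vwu, v.median_tvo_hours))


cheap_tokens = VariantRun("cheap-tokens", total_cost=900.0, outputs=120,
                          edits=360, vwus=60, median_tvo_hours=26.0)
fast_verified = VariantRun("fast-verified", total_cost=1000.0, outputs=110,
                           edits=110, vwus=80, median_tvo_hours=5.0)

for run in (cheap_tokens, fast_verified):
    print(f"{run.name}: ${run.cost_per_vwu:.2f}/VWU, "
          f"{run.edits_per_output:.1f} edits/output, TVO {run.median_tvo_hours:.0f} h")

print("Keep:", pick_winner(cheap_tokens, fast_verified).name)  # Keep: fast-verified
```

Ranking by cost per VWU first and median TVO second is only one choice; a team whose bottleneck is speed might reverse the order or weight the two.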

The Bottom Line

Token budgets are the new "minutes watched" from the network TV era: useful, addictive, and easy to optimize, but misaligned with the outcome that matters.

The expert obsesses over the visible meter. A "naive" first-principles thinker keeps tokens as guardrails, then measures the only unit that matters: Verified Work Units shipped faster, with less correction.

That is where the economics get real.

Reflection Points

If your AI budget tripled tomorrow, what shipped outcome would you need to see, in Verified Work Units and Time-to-Verified Outcome, to call it a win?

What AI productivity gains can you verify today?